Until recently, Cosmos DB’s pricing model was based on per-collection provisioning. This model was a good fit for single-entity applications using Cosmos as a flow-through data-point collection or for other narrow, well-defined collection usages.

But for applications spanning multiple entities with broader usage patterns, this could prove cost-prohibitive. Each document type or entity would naturally have been designed to go into its own collection. But with the minimum price for the smallest collection around $30 a month for 400 RU, an enterprise would think twice before designing an application that used Cosmos as a full back-end, because low-usage collections would start adding up in the solution cost. If nothing else, this pricing strategy flew in the face of the code-first benefits that document-oriented databases promise.
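To put rough numbers on that, here is a back-of-the-envelope sketch. It assumes the figure quoted above (about $30 a month for 400 RU) and assumes cost scales linearly with provisioned RU. The function names and the 10-entity scenario are made up for illustration, not taken from any Azure pricing tool.

```python
# Assumption: ~$30/month for 400 RU (the figure quoted in this post),
# with cost scaling linearly with provisioned RU.
PRICE_PER_RU_MONTH = 30 / 400


def per_collection_cost(collection_count, ru_per_collection=400):
    """Monthly cost when every collection gets its own provisioned RU."""
    return collection_count * ru_per_collection * PRICE_PER_RU_MONTH


def shared_database_cost(database_ru):
    """Monthly cost when collections share RU provisioned on the database."""
    return database_ru * PRICE_PER_RU_MONTH


# Hypothetical application with 10 entity types, mostly low-traffic.
entities = 10
print(f"{entities} dedicated collections: ${per_collection_cost(entities):.2f}/month")
print(f"one shared 1000 RU database: ${shared_database_cost(1000):.2f}/month")
```

Under those assumptions, ten minimum-size collections come to about $300 a month, while a single 1000 RU pool for all of them comes to about $75. Whether 1000 RU is actually enough depends entirely on your workload.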

Worse, this pricing led some to use a single collection for all entities of all types. While that move saved money by not over-provisioning collections for small, infrequent documents, it fostered no separation of concerns in the data and did not promote separation of concerns in code as microservices otherwise would have.

Good News Everyone!

This is all in the past!

Cosmos DB now lets you provision throughput at the database level. Collections created under that database then share the provisioned RU and its cost. You can still provision specific collections with dedicated RU to meet your needs. But if you do not, all the collections in that database share the database's provisioned throughput.

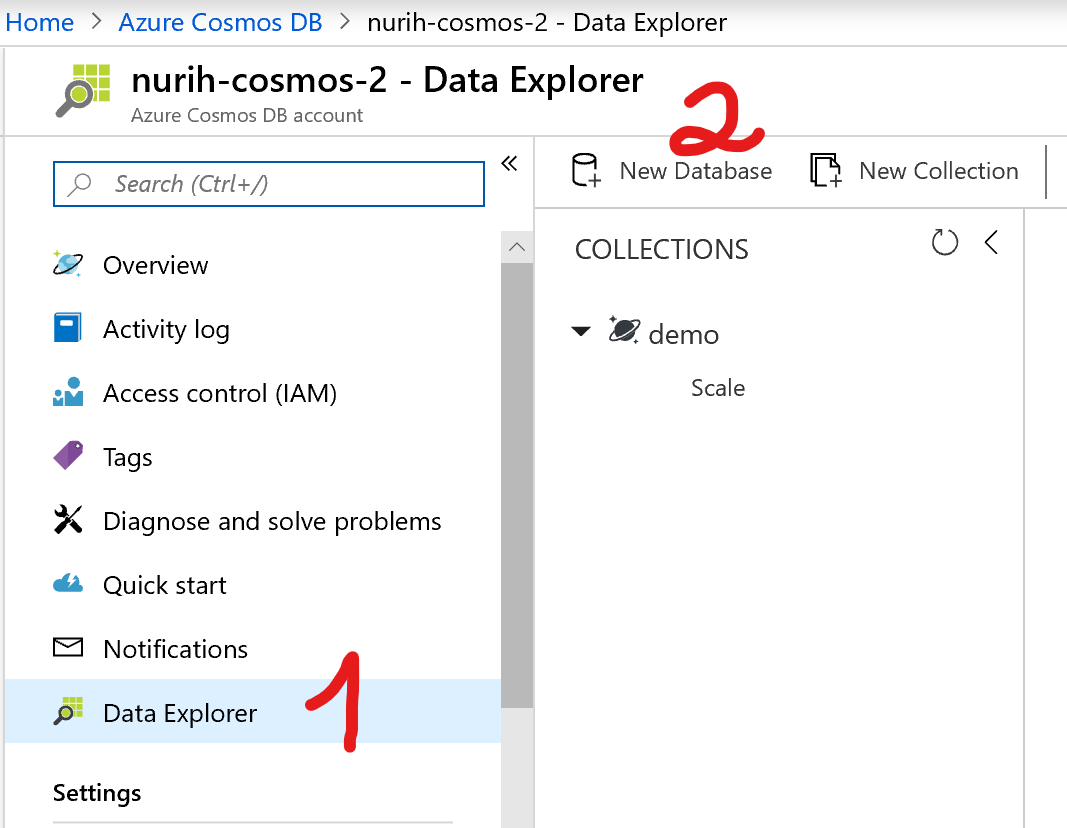

To do this, start by creating a Cosmos DB account. Once the account is created, go to the Data Explorer and click the “New Database” link at the top.

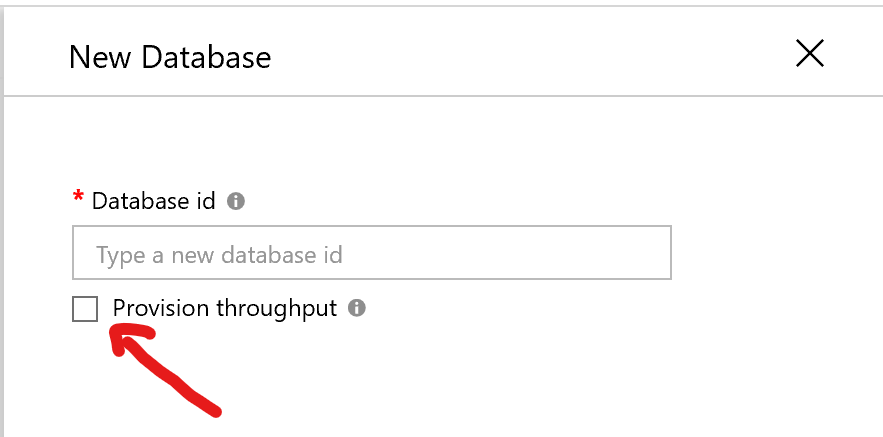

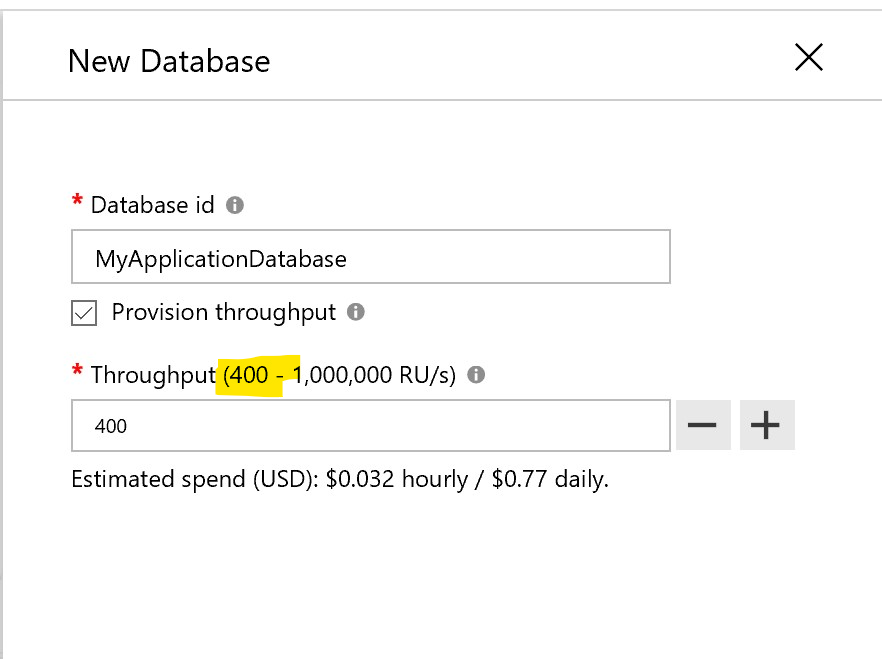

In the dialog, type the name of your database, and check the “Provision throughput” checkbox.

This will reveal a secondary input where you can enter the desired throughput. The minimum provisioning is 400 RU, but the default value in that box is 1000 RU. Choose the throughput level your application demands; that’s something you have to determine on your own.
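If you would rather script this than click through the portal, the same setup can be sketched with the `azure-cosmos` Python SDK. Treat this as a minimal sketch, not the official recipe: the database name, container names, partition key paths, and the `COSMOS_ENDPOINT` / `COSMOS_KEY` environment variables are all hypothetical, and the helper takes the client as a parameter so it can be tried against a stub.

```python
def create_shared_database(client, database_id, throughput, containers):
    """Create a database with shared throughput, then its containers.

    `containers` maps a container id to its partition key object.
    No throughput is passed per container, so they draw from the
    RU provisioned on the database.
    """
    database = client.create_database_if_not_exists(
        id=database_id,
        offer_throughput=throughput,  # RU provisioned at the database level
    )
    for container_id, partition_key in containers.items():
        database.create_container_if_not_exists(
            id=container_id,
            partition_key=partition_key,
        )
    return database


def main():
    # Imported here so the helper above has no hard dependency on the SDK.
    import os
    from azure.cosmos import CosmosClient, PartitionKey

    # Hypothetical environment variable names for the account endpoint and key.
    client = CosmosClient(
        os.environ["COSMOS_ENDPOINT"],
        credential=os.environ["COSMOS_KEY"],
    )
    create_shared_database(
        client,
        database_id="shop",  # hypothetical database name
        throughput=400,      # the minimum mentioned above
        containers={
            "customers": PartitionKey(path="/id"),
            "orders": PartitionKey(path="/customerId"),
        },
    )
```

Calling `main()` with those two environment variables set should leave you with one database and two collections sharing its 400 RU. Passing `offer_throughput` to an individual container instead would give that container its own dedicated RU, which is the "specific collections with specific RU" option mentioned earlier.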

That’s it! Collections created under the shared throughput will share the cost and throughput at the database level. No more kludge code to force all documents of all types into one collection. No more skimping on collection creation for low-volume / low-frequency data to save a buck. Sharing the throughput lets you architect and code your application without weirdness coming from the pricing model.

Kudos to the team that made this possible.