The MongoDB Durability and High Availability Advantage

In this era of digital transformation, businesses rely on data-driven applications and data-driven insights to make informed decisions. Data is the central piece. Three properties of that data are usually taken for granted: that it is accurate, that it is available, and that it is durable. This article focuses on the last two. When selecting a database to serve your real-time applications, the reliability and availability of that database are crucial. How do we achieve them? How do we determine the levels of availability and durability appropriate for our application? Which database gives us the strategic advantage that drives agility and rapid innovation without forgoing the three key data properties? This article examines the MongoDB database architecture with respect to those questions.

MongoDB provides increased durability and high availability compared to traditional databases. This translates into improved data integrity, reduced downtime, and higher system resilience.

About Data Durability and High Availability

Availability and durability are key concepts that all databases address, or at least should address to be considered for production workloads. Especially in the world of OLTP (Online Transaction Processing), data which is not guaranteed to be durable and available makes for low utility. Before digging into MongoDB’s approach to this, let’s define these two terms:

Data durability refers to the ability of a database system to persist data even in cases of hardware or operating system failure. A database system that guarantees durability should have the same data that was available before a crash completely present and usable after crash recovery. The database system ensures that data is not lost due to abnormal system behavior. If power is lost, a disk is corrupted, the operating system crashes unexpectedly, or the database process crashes, data which was written prior to any such event should not be lost.

High availability refers to the ability of a database system to remain accessible and operational, even in the event of hardware failures or network outages. The word “system” in “database system” is key, since a certain redundancy is assumed. Whether by having a chassis stuffed with extra hardware (CPU, memory, I/O bus, disks, power supply, network, etc.) or by enrolling multiple host machines in the database system as a whole, this feature requires redundancy to a degree where no single resource failure causes the system as a whole to stop fulfilling its function. This is typically accomplished through techniques like replication, load balancing, and automatic failover. A highly available database system minimizes downtime and continues to function in the face of system component failure.

Traditionally, relational database systems struggled to provide both strong durability and high availability. While they offer robust data consistency, their high-availability offering was often complex to configure and manage, and often required manual intervention or considerable downtime to stabilize in the face of failure. The bigger the system, the more pronounced this became. This complexity sometimes led to failure in its own right, increasing rather than decreasing the risk of data loss and system downtime.

Here are a few examples of traditional database system approaches, with noted risks to durability and high availability.

| Setup | Aspect | Risk |
|---|---|---|
| RAID Subsystem | H/A, Durability | RAID subsystem failure, connection failure, enclosure failure. |
| Block Replication | H/A, Durability | Replication lag, data loss, integrity if blocks are corrupt, consistency issues, difficult to determine logical data point of recovery. |
| Logical Replication | H/A, Durability | Replication lag, cross-node consistency. |
| Replication - hot standby | H/A, Durability | Extended failover period, manual intervention, replication lag, non-deterministic data integrity. |
| Replication - bidirectional | H/A, Durability | Data consistency, slower writes, complex conflict resolution. |
| Backup | Durability | Manual recovery, lengthy downtime, potential data loss. |

Database system designers attempt to address all of these risks to some degree, and have developed remarkable point solutions to specific risks. The question one still faces is: taken as a whole, does my chosen system provide an adequate answer to these risks?

Before moving on to how MongoDB addresses durability and high availability, a note about ACID. The ACID acronym characterizes the desirable system properties of Atomicity, Consistency, Isolation, and Durability. While durability is a focus of this article, the first three terms can use a bit of framing.

The properties are stated in terms of a “transaction”, an overloaded term that is traditionally interpreted as a form of write-bundling. In that interpretation, the read and write operations within a single transaction either succeed or fail as a unit. When the transaction succeeds, all of its writes become visible “at once”, and readers see them as the new state of the data. In their traditional interpretation, however, these terms were quite narrow.

Atomicity focused on a database process writing locally, on a single host. It did not govern how transactions would apply writes on a multi-host system or on a system with hot-standby replication.

Consistency was designed to guarantee even less: when a multi-byte piece of data is written, it should appear to be written completely. For example, a long string written into a column of a single row has to be written in full, never in parts, as far as users are concerned. This has more to do with the integrity of multi-byte data, which must not be interrupted or corrupted: no “half strings”, and no long numbers partially written because of OS time-slicing or other parallel conditions. It never extended to writing the same data on two hosts. The idea of “Eventual Consistency” came later, with the more collaborative clustering techniques that modern systems (MongoDB among them) use to deliver a highly available database system.

And finally, isolation: the property of a transaction that spans multiple logical operations (say, checking a row condition, then writing data to multiple rows and tables). Again, this was designed as a property of a single-node database, and it protects data within a transaction from parallel operations by other users. It is the notion of a view of the data that the system maintains to support atomicity: the effects of transactions not yet committed are not exposed to others, and our own transaction is not affected by neighboring transactions while it executes.

When more modern multi-node database systems came on the scene, attempts to fault them for not being “ACID compliant” presumed that single-node ACID guarantees would magically extend to multi-node clusters. Distributed locking and other pessimistic concurrency mechanisms did emerge, but they were typically too slow on write and are largely disfavored. Thankfully, the understanding of “Eventual Consistency” has evolved, and the discussion is now about applications being able to have consistent reads, rather than insisting that replication magic always ensures that any piece of data written to the system is synchronized under lock. Without this understanding, it is hard to discuss which databases deliver on ACID and on consistency, and why.

MongoDB’s Durability Mechanism

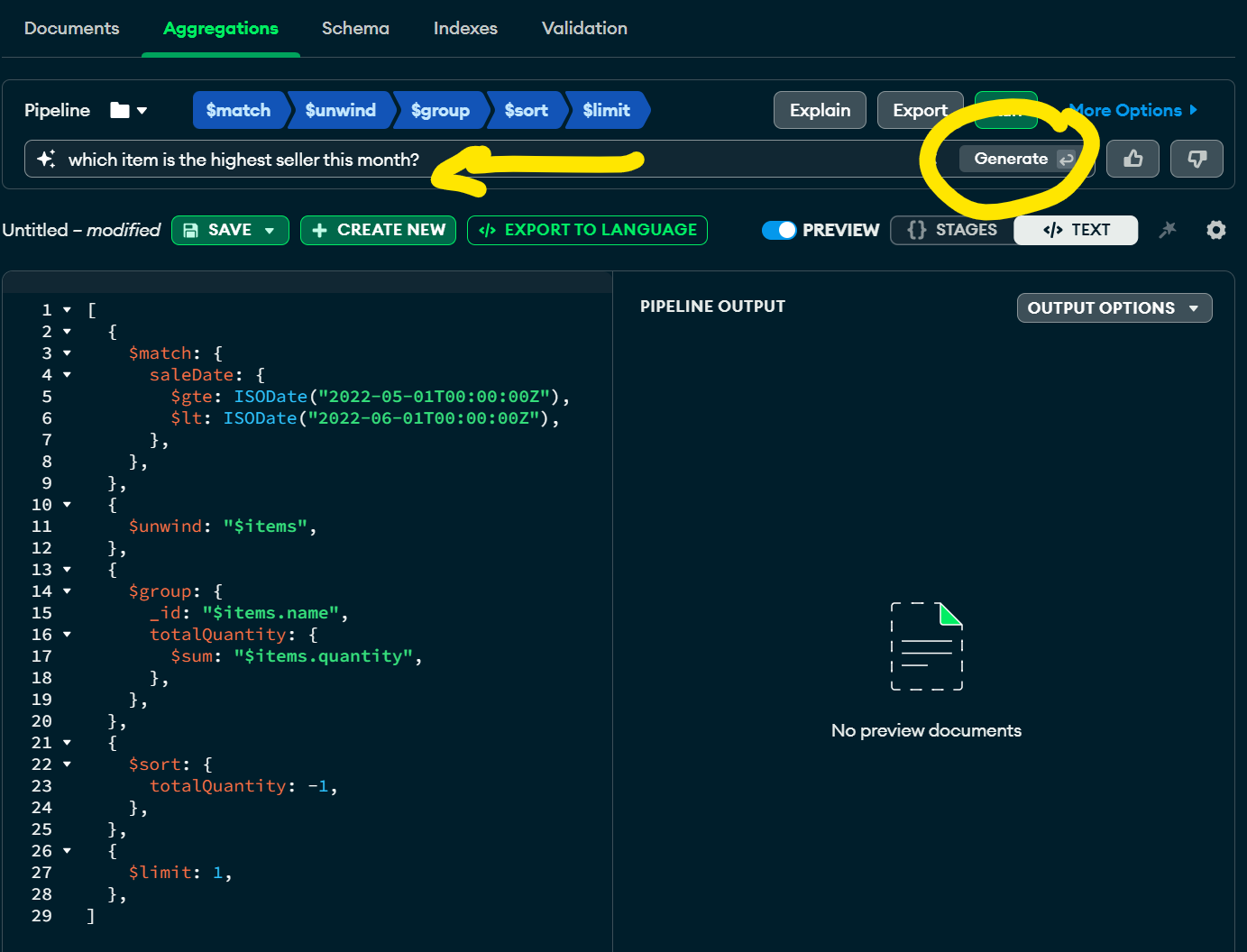

The ability of data to survive unforeseen crashes and various failures in MongoDB relies on the internal journal mechanism, whereby writes are first applied to disk in raw form and then processed later into the main storage blocks. This ensures that if the host crashes before data has made it to the main storage, the transactions are recovered from the journal upon restart and applied before the database is opened for any operation.
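
The recovery path described above can be illustrated with a toy write-ahead journal. This is a minimal sketch of the general technique, not MongoDB's storage engine: the `ToyStore` class, the `journal.log` file name, and the JSON record format are all assumptions made for illustration.

```python
import json
import os
import tempfile


class ToyStore:
    """Toy key-value store with a write-ahead journal (illustrative only)."""

    def __init__(self, directory):
        self.journal_path = os.path.join(directory, "journal.log")
        self.data = {}  # stands in for the main storage blocks
        self._recover()

    def _recover(self):
        # On startup, replay every journaled write before serving requests.
        if not os.path.exists(self.journal_path):
            return
        with open(self.journal_path, "r", encoding="utf-8") as journal:
            for line in journal:
                record = json.loads(line)
                self.data[record["key"]] = record["value"]

    def write(self, key, value):
        # 1. Append the raw write to the journal and force it to disk.
        with open(self.journal_path, "a", encoding="utf-8") as journal:
            journal.write(json.dumps({"key": key, "value": value}) + "\n")
            journal.flush()
            os.fsync(journal.fileno())
        # 2. Only then apply it to the in-memory "main storage".
        self.data[key] = value


def demo():
    with tempfile.TemporaryDirectory() as directory:
        store = ToyStore(directory)
        store.write("order:1", "paid")
        # Simulate a crash: in-memory state is lost, the journal survives.
        del store
        recovered = ToyStore(directory)
        return recovered.data
```

The important ordering is in `write`: the journal entry reaches disk before the write is treated as applied, so a restart can always rebuild the state from the journal.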

Once data is on disk, whether in journal or main storage form, it is considered durable. But this is just the beginning. In a replica set cluster, MongoDB further applies those writes to other members of the cluster, thereby protecting not only against a temporary crash, but also against the complete, irrecoverable failure of a host (or even several hosts). Having the data distributed among several different hosts allows MongoDB to offer higher durability than any single-instance database system, especially when the cluster hosts reside in different geographical locations.

As for transactional isolation, MongoDB supports multi-document transactions (and has always supported single-document transaction isolation too). You may read more about transactions in the official documentation, especially the sections on atomicity and isolation.

The journaling mechanism allows customers to pick the host and cross-host transaction consistency levels. At minimum, applications can send unacknowledged write operations. This is fast, but does not guarantee durability: in the face of an error, the application receives no guarantee that the operation was carried out. From there on, a write concern of j:true or w:majority ensures that the writing application receives an acknowledgement only after the data has been locally applied to the journal, and would therefore withstand a crash.
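
Write concern can be set per operation or as a connection-wide default. As a minimal sketch, the helper below assembles a connection string using the standard URI options `replicaSet`, `w`, `journal`, and `wtimeoutMS`. The host names, the replica set name `rs0`, and the helper `build_uri` itself are hypothetical.

```python
from urllib.parse import urlencode


def build_uri(hosts, replica_set, w="majority", journal=True, wtimeout_ms=5000):
    """Assemble a MongoDB connection string with a default write concern."""
    options = {
        "replicaSet": replica_set,
        "w": w,                           # acknowledgements required
        "journal": str(journal).lower(),  # wait for the on-disk journal
        "wtimeoutMS": wtimeout_ms,        # stop waiting after this long
    }
    return "mongodb://" + ",".join(hosts) + "/?" + urlencode(options)


# Hypothetical three-member replica set.
hosts = ["db1.example.net:27017", "db2.example.net:27017", "db3.example.net:27017"]
durable_uri = build_uri(hosts, "rs0")

# Unacknowledged writes: fast, but with no durability guarantee.
fast_uri = build_uri(hosts, "rs0", w=0, journal=False)
```

The resulting string can be handed to an official driver's client constructor, so every write on that connection inherits the chosen write concern unless an operation overrides it.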

But as mentioned, MongoDB can extend the durability guarantee beyond a single host, by using a Replica Set configuration - something traditional database systems struggle to provide.

The MongoDB Replica Set

A replica set in MongoDB is an arrangement of 3 or more hosts, where one of the replica set’s members is writable at any given point in time, and other members mirror the data from that writable member. In case of failure of the primary member, a secondary node takes over the primary role quickly, automatically, and without manual intervention.

The data replication mechanism relies on a special linearized log of logical write operations called the OpLog. Every write received by MongoDB results in an entry in the OpLog as well as in the Journal. Operations in the OpLog are written in the sequence in which they were applied to that server. Therefore, any secondary member that reads them and applies them locally in the same order is guaranteed to end up with exactly the same state the primary had when applying that write.
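
The ordering argument can be shown with a toy model. This is only a sketch of ordered log replay: the tuple-based operation format and the `apply` helper are assumptions, not the actual OpLog entry format.

```python
def apply(state, op):
    """Apply one logical write operation to a dict-based 'collection'."""
    kind, key, value = op
    if kind == "set":
        state[key] = value
    elif kind == "delete":
        state.pop(key, None)
    return state


# Primary: each write is applied and appended to the log in order.
primary_state, oplog = {}, []
for op in [("set", "a", 1), ("set", "b", 2), ("set", "a", 3), ("delete", "b", None)]:
    apply(primary_state, op)
    oplog.append(op)

# Secondary: replaying the same log in the same order reaches the same state.
secondary_state = {}
for op in oplog:
    apply(secondary_state, op)
```

Replaying the same entries in a different order could leave `a` at 1 instead of 3, which is why the log is strictly sequential.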

With this mechanism, MongoDB can now guarantee a higher level of durability. When a write is sent with a write concern of w:majority, its acknowledgement guarantees that the data already resides on a majority of the replica set members, and not just on one server. At that point, even a catastrophic, complete, and irrecoverable loss of the primary would not lose our data.
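
The size of a majority, and how many members can be lost while a majority remains, follows from simple arithmetic. A small sketch, with hypothetical helper names:

```python
def majority(voting_members):
    """Smallest number of members that forms a majority."""
    return voting_members // 2 + 1


def fault_tolerance(voting_members):
    """Members that can fail while a majority is still reachable."""
    return voting_members - majority(voting_members)


for n in (3, 4, 5):
    print(n, "members: majority", majority(n), "tolerates", fault_tolerance(n))
```

Note that a fourth member raises the majority to three without raising the number of tolerated failures, which is one reason odd member counts are the usual choice.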

MongoDB employs a single writable node in a replica set, meaning that applications must connect and write to that primary. But applications do not have to implement monitoring or connection logic. All official software drivers provide cluster discovery, connection, connection pooling, and routing out of the box. When a primary node fails, a secondary node becomes primary, and applications are largely oblivious to that fact. If an in-flight write is interrupted by the primary failure, the application receives an error and can retry its transaction, which succeeds once a new primary is available. The whole failover can take seconds. Traditional hot-standby cutovers and other recovery systems in RDBMS would take tens of seconds or minutes, or even require manual intervention, which makes this level of high availability much more difficult for them to achieve or maintain.
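
An application-level retry around an interrupted write might look like the sketch below. The `PrimaryUnavailable` exception is a hypothetical stand-in for whatever error a driver raises during an election, and `with_retry` is an illustrative helper, not a driver API. Official drivers can also retry certain writes on their own, so treat this as a picture of the idea rather than required code.

```python
import time


class PrimaryUnavailable(Exception):
    """Hypothetical stand-in for a driver's 'no primary' or network error."""


def with_retry(operation, attempts=5, delay_seconds=0.01):
    """Run operation, retrying while no primary is available."""
    for attempt in range(1, attempts + 1):
        try:
            return operation()
        except PrimaryUnavailable:
            if attempt == attempts:
                raise
            time.sleep(delay_seconds * attempt)  # simple linear backoff


# Simulated write that fails twice (election in progress), then succeeds.
calls = {"count": 0}


def flaky_write():
    calls["count"] += 1
    if calls["count"] <= 2:
        raise PrimaryUnavailable("election in progress")
    return "acknowledged"


result = with_retry(flaky_write)
```

A retry like this is only safe when the operation is idempotent, or when it is wrapped in a transaction that either fully commits or leaves no trace.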

Eventual Consistency

At this point the concept of Eventual Consistency typically comes up. It stems from the observation that at some point data may be written at the primary and be visible at the primary, yet not be visible or even present at some secondary node. Luckily (by design, actually) MongoDB has addressed this with what is called a Read Concern. A Read Concern is a mode that an application sends along with commands, instructing the replica set node to consider only certain data while processing. Specifying a Read Concern of majority ensures that MongoDB replies to a query only with data that has already been acknowledged by a majority of nodes. This guarantees that the data returned is both durable and highly available. For data written with Write Concern w:majority, this reciprocal Read Concern provides a “read your own writes” behavior which generally satisfies consistency needs across the board.

There are several Read Concern levels, and nuances regarding transactional isolation levels, but the general idea here is: Yes, you can have consistent reads with MongoDB. Surprisingly though, not all NoSQL systems, let alone RDBMS, provide this level. Multi-primary (or bi-directional replication) databases don’t always allow for this. Hot-standby and replication arrangements at the storage level struggle to provide this too. Some systems boast speed, yet fail to update indexes at the same time as the writes, causing further confusion when attempting to deliver on consistency.
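
To make the majority Read Concern concrete, here is a toy model of the point up to which a majority of members have applied the log. The function names and the list-of-positions representation are assumptions for illustration. Real replica sets track this internally.

```python
def majority_commit_point(applied_positions):
    """Highest log position that a majority of members have applied."""
    needed = len(applied_positions) // 2 + 1
    return sorted(applied_positions, reverse=True)[needed - 1]


def read_with_majority(oplog, applied_positions):
    """Return only the writes already acknowledged by a majority."""
    return oplog[:majority_commit_point(applied_positions)]


oplog = ["w1", "w2", "w3", "w4", "w5"]
applied = [5, 3, 4]  # primary has applied 5 entries, secondaries 3 and 4
visible = read_with_majority(oplog, applied)
```

In this model the fifth write exists only on the primary, so a majority read does not return it until a second member has applied it.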

Summary

MongoDB’s Replica Set mechanism provides a combination of consistency and high availability that is not common in other distributed database systems. Further, this mechanism provides stronger durability and availability than any single-node database can provide. It therefore offers organizations an attractive database solution that can support the most demanding needs.
